LightingRL Series

Collection

Diffusion Large Language Models with a SOTA Accuracy–Parallelism Trade-off • 7 items • Updated • 2

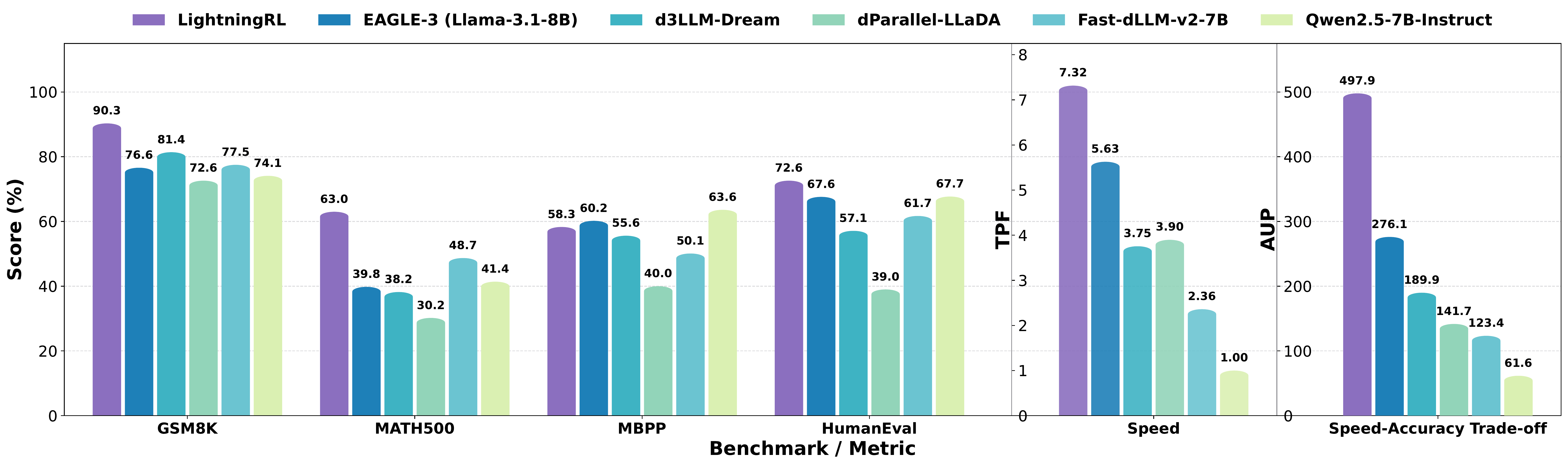

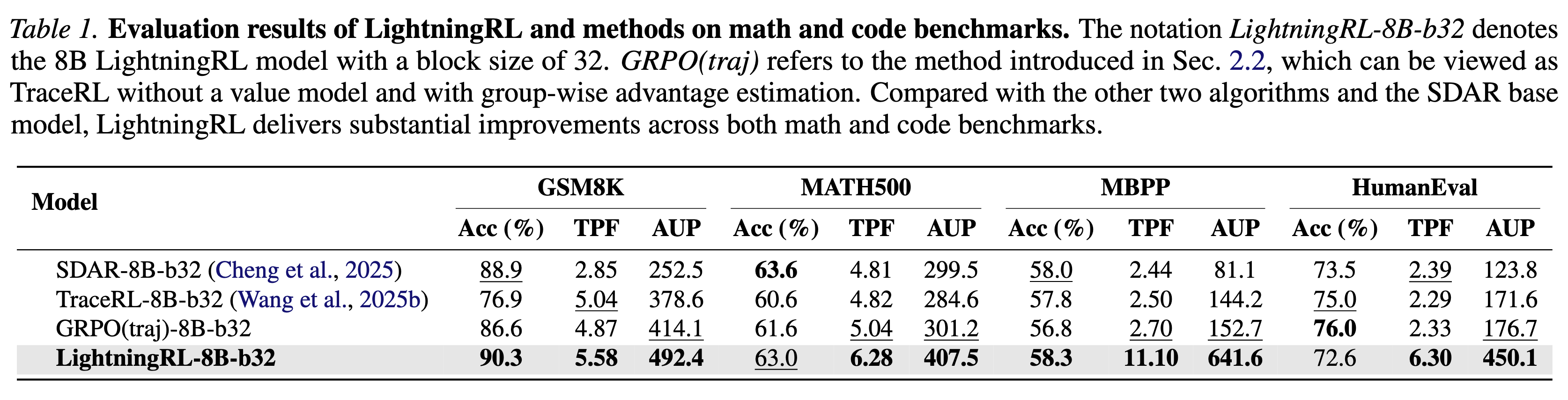

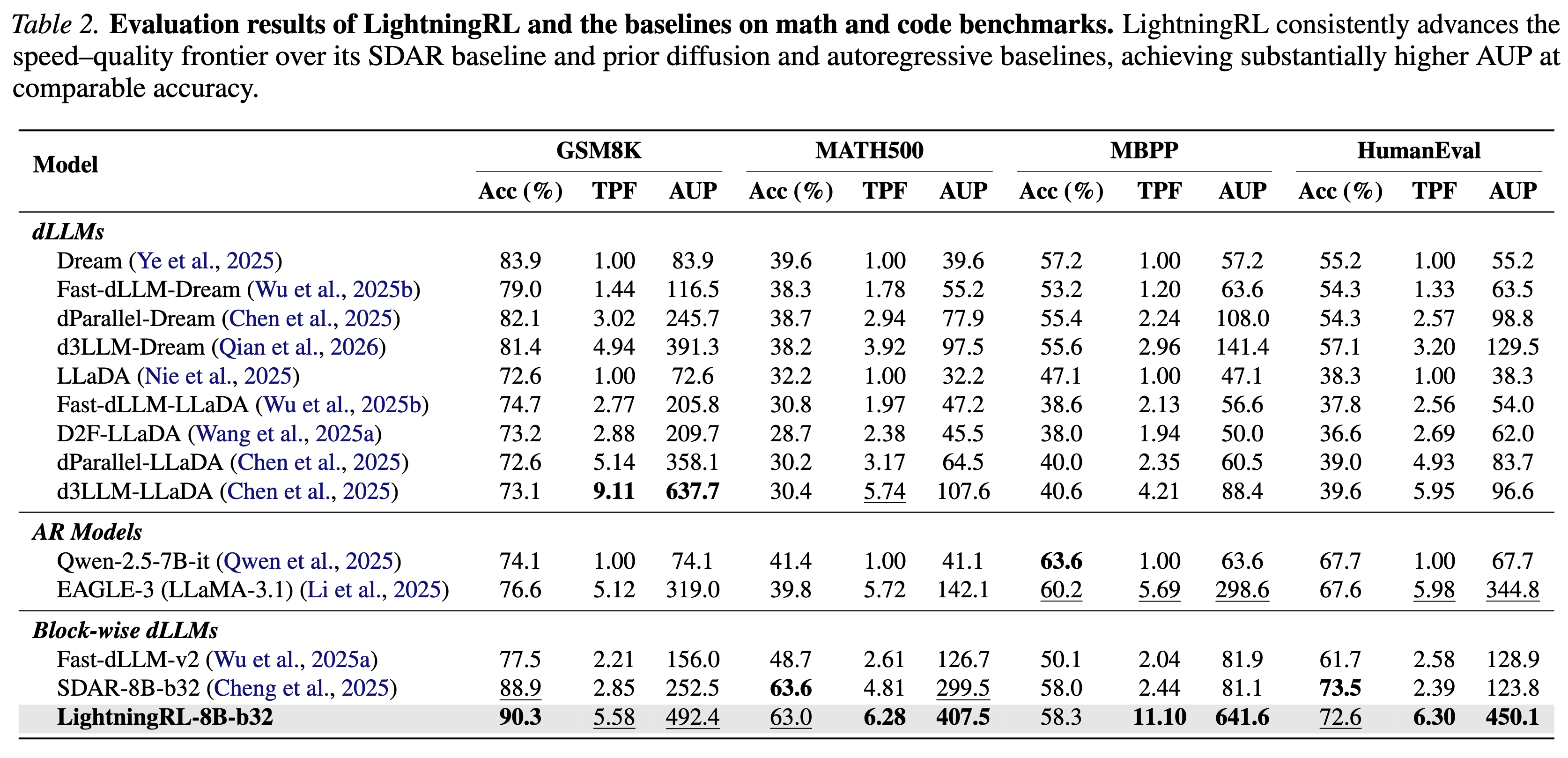

We introduce LightningRL, a reinforcement learning post-training framework for block-wise diffusion Large Language Models (dLLMs) that breaks the accuracy–parallelism trade-off. Applied to SDAR-8B, LightningRL achieves 7.32 average TPF and 497.9 AUP — simultaneously improving both generation quality and inference speed.

@misc{hu2026lightningrlbreakingaccuracyparallelismtradeoff,

title={LightningRL: Breaking the Accuracy-Parallelism Trade-off of Block-wise dLLMs via Reinforcement Learning},

author={Yanzhe Hu and Yijie Jin and Pengfei Liu and Kai Yu and Zhijie Deng},

year={2026},

eprint={2603.13319},

archivePrefix={arXiv},

primaryClass={cs.LG},

url={https://arxiv.org/abs/2603.13319},

}